In a couple of recent posts, I argued that random patterns in Conway’s Game of Life tend, on average, to live longest when they have an initial density of 37.5% cells being alive. In this post, rather than looking at the distribution of the average lifespan for various initial densities, I will fix the density at 37.5% and look at the distribution of lifespans that results, and use that to estimate what the maximal lifespan of any small pattern is (as in my previous posts, I will use 20×20 starting patterns). As before, I will explore both the case when we simulate Life on a torus and when we simulate it on an infinite plane.

Lifespan Distribution on a Torus

After simulating 175000 random patterns on a 20×20 torus, the distribution of the lifespans was as follows:

The bins in the graph have a width of 10, and the peak occurs in the lifespan 91 – 100 bin. Some other interesting stats include:

The bins in the graph have a width of 10, and the peak occurs in the lifespan 91 – 100 bin. Some other interesting stats include:

- Maximum observed lifespan: 2092

- Minimum observed lifespan: 14

- Average lifespan: 210

- Median lifespan: 164

- Standard deviation of lifespan: 159.3

In order to try to estimate the longest lifespan of any pattern on a 20×20 torus, I will assume that if the lifespan is above 100, then it follows an exponential distribution, which appears to be a reasonable assumption given the above histogram. Indeed, this leads to the following histogram, which has the exponential of best fit overlaid in black. The equation of the exponential is y = 9088.7(0.938)x, and the R2 value is 0.997 (that’s one heck of a good fit).

Now that we have our exponential of best fit, we can get an estimate of the maximal lifespan in this scenario by assuming that the lifespan of the 220*20 different patterns are independent of each other (this may not be a particularly good assumption, as two patterns, for example, will have the same lifespan if they are translations of each other — nonetheless it seems that the number of reductions that can be made by symmetry arguments like that is tiny compared to the total number of such patterns, so let’s roll with it). We then set

Now that we have our exponential of best fit, we can get an estimate of the maximal lifespan in this scenario by assuming that the lifespan of the 220*20 different patterns are independent of each other (this may not be a particularly good assumption, as two patterns, for example, will have the same lifespan if they are translations of each other — nonetheless it seems that the number of reductions that can be made by symmetry arguments like that is tiny compared to the total number of such patterns, so let’s roll with it). We then set

![]()

and solve for x to get a maximal lifespan of x = 4474. This seems believable and not particularly shocking to me given the statistics that I listed earlier.

Lifespan Distribution on a Plane

Typically, Life enthusiasts are more interested in results on the infinite plane than on a torus, as it leads to behaviour that is much more chaotic and unpredictable. For example, even though we just saw that the longest-lived 20×20 pattern on a torus likely lives for less than 4500 generations, there is a known 9×15 pattern that lives on the plane for over 29000 generations. We will now proceed analogously to the torus discussion above to see how much better we can reasonably expect to do. We begin with the distribution of the lifespan based on 60000 initial 20×20 patterns:

The bins in the graph have a width of 50, and the peak occurs in the lifespan 151 – 200 bin. The other basic stats are listed below, and as we would expect, the average lifespan and the standard deviation of lifespans are both much greater on the plane than they are on the torus.

- Maximum observed lifespan: 13612

- Minimum observed lifespan: 15

- Average lifespan: 678

- Median lifespan: 368

- Standard deviation of lifespan: 885.6

The main difference with the analysis in this situation is that instead of assuming the lifespan itself follows an exponential distribution, I will assume that some power of the lifespan follows an exponential distribution; the reason for this is that the R2 is quite low if we try to fit an exponential to the distribution of the lifespans itself. Fitting an exponential to lifespan0.75 seems to give the maximum R2 value (0.9857), so this is what I will use. The following histogram shows the curve of best fit, which has the equation y = 1854.6(0.957)x, where this time x = lifespan0.75.

Finally, we follow our method from before and set

![]()

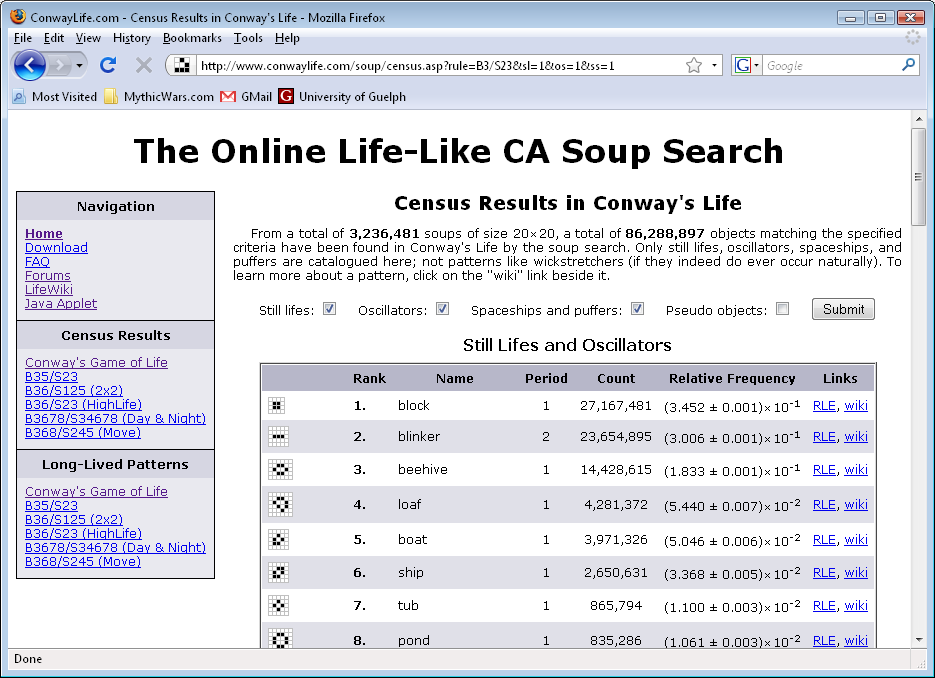

and solve for x to get a maximal lifespan of x = 120795. Of course, this number should be taken with a grain of salt, because that pattern I mentioned earlier that has a lifespan of over 29000 generations was found during a simulation of a whopping 12 billion 20×20 patterns, while our equation of best fit tells us that after 12 billion patterns, we would expect only to see a pattern with lifespan 6206 (when really it should only take about 400 patterns to see such a lifespan). Similarly, in the longest-lived patterns found by the Online Life-Like CA Soup Search, a pattern has been found with lifespan 24389. According to our formula, we should have to look at roughly 9 million billion billion billion soups before finding such a pattern, but only 40 million soups have actually been searched.

This all seems to suggest that the longest-lived pattern in a 20×20 box may in fact live considerably longer than 120000 generations, which goes to show just how in the dark we are when it comes to some of these simple questions — since the search space is so unimaginably huge, we likely will never know for sure what the true upper limit is.

Downloads:

- Lifespan raw data and graphs [.zip of an Excel spreadsheet — 2.91MB]

- Lifespan recorder scripts [.zip of two Golly Python scripts]